The Survival of Systems Against Entropy

The shortage of natural resources and the destruction of the natural environment are two colossal problems that now threaten our existence. These two seemingly separate problems are in fact one: the problem of entropy. On this page, I will show that the essence of both problems is entropy and that the key to resolving them lies in how to decrease entropy. So, first, let me explain what entropy is.

1. The Definition of Entropy

We often say, “Save energy.” But the first law of thermodynamics tells us that the total amount of energy is constant and can neither diminish nor vanish; what our consumption of energy does is transform useful energy into useless energy. How to obtain useful energy is the problem of resources, and how to decrease or dispose of useless energy is the problem of the environment. To understand the essence of these problems, you must know what this “transformation” is.

1.1. Clausius’s Definition

In the 1850s[1], Rudolf Clausius, a German physicist, coined the term “entropy” from the Greek word “τρεπειν”, which means “transformation”, prefixing “en” to make it resemble the word “energy”[2]. Entropy increases when useful energy becomes useless. It is a quantitative measure, and its symbol is S. When a system absorbs heat Q while the temperature of the system remains at T, its entropy increases by

ΔS = Q/T.

For example, when 336 J of heat completely melts 1 g of ice at a pressure of 1 atmosphere, with the temperature constant at 0°C = 273 K, the entropy increases by

ΔS = 336 J / 273 K ≈ 1.23 J/K.

Entropy never decreases in an isolated system, i.e. a system that cannot exchange matter, heat, work, or information with its environment. This is the second law of thermodynamics. The universe we live in is an isolated system, where entropy increases over time, approaching a maximum value, the heat death.

A common-sense version of the second law of thermodynamics is: Heat cannot of itself pass from a colder to a hotter system. Suppose there are two systems in an isolated system and a very small quantity of heat q flows from the hotter system S1 (temperature=T1) to the colder system S2 (temperature=T2).

As q is tiny, you can assume both T1 and T2 do not change.

The increase in entropy of S1=-q/T1

The increase in entropy of S2=+q/T2

The total increase of the entropy in the isolated system is positive because T1 is bigger than T2, and q/T2 is therefore bigger than q/T1:

ΔS = -q/T1 + q/T2 = q(T1-T2)/(T1T2) > 0

This formula shows that the total entropy increases whenever heat flows from the hotter to the colder system. If heat were to pass by itself from a colder to a hotter system, the entropy of an isolated system would decrease, which never happens.
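The sign argument above can be checked numerically. The following is only a minimal Python sketch; the function name `total_entropy_change` and the temperatures 373 K and 273 K are illustrative assumptions, not values from the text.

```python
def total_entropy_change(q: float, t_hot: float, t_cold: float) -> float:
    """Total entropy change when heat q leaves the system at t_hot
    and enters the system at t_cold (temperatures in kelvin).

    Assumes q is small enough that neither temperature changes.
    """
    if t_hot <= 0 or t_cold <= 0:
        raise ValueError("absolute temperatures must be positive")
    ds_hot = -q / t_hot    # the hotter system S1 loses heat
    ds_cold = q / t_cold   # the colder system S2 gains heat
    return ds_hot + ds_cold


# Heat flowing from hot to cold: total entropy increases.
forward = total_entropy_change(1.0, t_hot=373.0, t_cold=273.0)

# A negative q models heat flowing "by itself" from cold to hot:
# the total entropy would decrease, which the second law forbids.
reverse = total_entropy_change(-1.0, t_hot=373.0, t_cold=273.0)

print(forward > 0, reverse < 0)
```

With any T1 > T2, the forward value is positive and the reverse value is negative, in line with the common-sense version of the second law.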

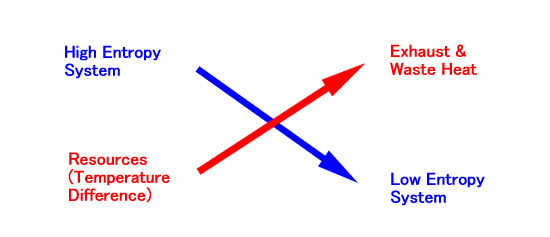

The entropy of a non-isolated system can decrease. In fact, the entropy of S1 decreases at the cost of a larger increase in the entropy of S2. Therefore, the second law of thermodynamics can be read as follows: in order to decrease the entropy of a non-isolated system, you must increase the entropy of its environment by a larger amount. I will schematize this compensation relationship as follows:

The top/bottom relation corresponds to higher/lower entropy, while the left/right relation corresponds to before/after the reaction. This crossing scheme indicates that transforming resources into exhaust or waste heat enables the system to reduce its entropy. This is the fundamental principle of maintaining life and civilization. To grasp the essence of life and civilization in terms of entropy, however, we should generalize the concept of entropy.

1.2. Boltzmann’s Definition

In 1877, Ludwig Boltzmann, an Austrian physicist, interpreted the concept of entropy statistically[3]. He found that the entropy is proportional to the logarithm of the number of microstates that ideal gas particles could occupy. Let me show why such an interpretation is possible.

Suppose a certain volume of gas absorbs heat Q and expands from V1 to V2 while its temperature T remains unchanged. The energy of Q is equal to the work of the expansion:

Q = ∫ P dV (integrated from V1 to V2)

The ideal gas equation is

PV = nRT = NkT

where

n = number of moles

N = number of molecules

R = universal gas constant = 8.31 J/(mol·K)

k = Boltzmann constant = Rn/N = R/Avogadro’s number (6.02×10²³) = 1.38×10⁻²³ J/K.

From the last two equations, entropy increases by

ΔS = Q/T = Nk ln(V2/V1) = k ln (V2/V1)^N = k ln W

The last formula, k ln W, is Boltzmann’s definition of entropy. Here W = (V2/V1)^N is the factor by which the number of microscopic configurations has increased.

A concrete example might help you see what W means. Suppose a certain volume of gas containing 100 molecules absorbs heat and the volume has doubled, that is to say, (V2/V1)=2 and N=100.

If we virtually divide the original volume of the gas into 100 rooms, one for each molecule, there is only one way to place the molecules. When the number of rooms doubles, each molecule can be placed in either of two rooms. So, there can be W = 2^100 configurations. In this case, entropy increases by k ln 2^100 = 100 k ln 2, about 69.3k.
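The arithmetic of this example can be reproduced in a few lines. This is a minimal sketch rather than a general thermodynamics routine; the function name is hypothetical, and the value of k is the rounded one quoted above.

```python
import math

K_B = 1.38e-23  # Boltzmann constant in J/K, rounded as in the text


def entropy_increase_in_units_of_k(volume_ratio: float, n_molecules: int) -> float:
    """Return ln W = N * ln(V2/V1), the entropy increase divided by k."""
    if volume_ratio <= 0 or n_molecules < 0:
        raise ValueError("volume ratio must be positive, N non-negative")
    return n_molecules * math.log(volume_ratio)


# 100 molecules, volume doubled: W = 2**100 configurations.
ln_w = entropy_increase_in_units_of_k(volume_ratio=2.0, n_molecules=100)

print(f"{ln_w:.1f} k")            # entropy increase in units of k
print(f"{K_B * ln_w:.3e} J/K")    # the same increase in J/K
```

Working with ln W = N ln(V2/V1) instead of W itself avoids handling the very large number 2^100 directly.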

Boltzmann’s definition enables you to apply the concept of entropy and its law to non-thermodynamic phenomena. Since the name “the second law of thermodynamics” is inappropriate in that case, I would like to use a more comprehensive name “the law of entropy”.

A drop of red ink in a tank of water turns the water pink, but the reverse never happens. If the temperature of the ink is equal to that of the water, this is not an increase of entropy in the sense of Clausius’s heat-based definition. The law of entropy still holds for the spread of the red ink molecules, for the uncertainty of the molecules’ distribution irreversibly increases.

Non-thermal entropy can also decrease at the cost of a larger increase of entropy in the environment. Wipe something with a dustcloth and you can remove dust from it at the cost of smudging the dustcloth. Wash the dustcloth and you can remove dust from it at the cost of contaminating water, and so on. This compensation itself is of course not completely non-thermal, because the human work of cleaning emits waste heat.

Based on Boltzmann’s definition, textbooks often regard entropy as a measure of disorder, but the expression “disorder” is rather vague. As a synonym of entropy, “uncertainty” is preferable. It is a concept of probability theory, and this definition enables us to apply the concept of entropy to information theory.

1.3. Shannon’s Definition

Now, the next step in expanding the definition of entropy is to apply the concept to non-material phenomena. In his 1948 paper, Claude E. Shannon defined the concept of entropy in terms of information theory[4]. His comprehensive definition of entropy is

H = -K Σ p(i) log p(i)

where p(i) is the probability of i and K is an arbitrary constant. In information theory, K is 1 and the base of the logarithm is 2 (binary logarithm), while K is Boltzmann’s constant and the base of the logarithm is e (natural logarithm) in physics.

Let’s apply Shannon’s formula to a simple example: rolling a die makes it uncertain which of the six faces will come up, and thus increases entropy by

-6 × (1/6) × log2(1/6) = log2 6 ≈ 2.585 bits

When the die lands and a face comes up, the entropy decreases to zero. The value of information is equal to the entropy it reduces. So, you can say the information value of the result is about 2.6 bits.
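The die example can be computed directly from Shannon’s formula. The sketch below is illustrative only; the function name `shannon_entropy` and its parameters are assumptions. It writes each term as p(i) log(1/p(i)), which is the same as -p(i) log p(i).

```python
import math


def shannon_entropy(probabilities, base: float = 2.0, k: float = 1.0) -> float:
    """K times the sum of p(i) * log(1/p(i)) over all outcomes i.

    With k=1 and base=2 the result is in bits. Outcomes with
    probability zero contribute nothing to the sum.
    """
    if not math.isclose(sum(probabilities), 1.0):
        raise ValueError("probabilities must sum to 1")
    return k * sum(p * math.log(1.0 / p, base) for p in probabilities if p > 0)


# Before the roll: six equally likely faces.
h_before = shannon_entropy([1 / 6] * 6)

# After the roll: one face is certain, so no uncertainty remains.
h_after = shannon_entropy([1.0, 0.0, 0.0, 0.0, 0.0, 0.0])

# Information value of the result = entropy it removes.
information_value = h_before - h_after
print(f"{information_value:.3f} bits")
```

Passing `base=math.e` and Boltzmann’s constant as `k` would give the same quantity in the physical units discussed below.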

Some claim that the information entropy differs from the thermodynamic entropy because K is different. But it is merely a matter of choosing measurement units. Just as the number of molecules or atoms in visible materials is too large to count without the unit of mole, the logarithm of the number of microscopic configurations is too large to describe without multiplying it by Boltzmann’s constant.

To use the familiar term of systems theory, I will name “W=1/p(i)” complexity. Complexity is the degree of uncertainty or the number of possible cases. The original meaning of “complex” is “folded together”. Therefore, you can define “complexity” as “possible cases folded together”. The possible cases of combination exponentially increase, as combined elements increase. In this sense, the ordinary definition of complexity as the number of elements is not irrelevant to mine.

The more you experience and think, the more elements you find and the more complex your possible worlds become. The information entropy increases with the lapse of time. But, strictly speaking, the law of entropy cannot apply to information systems themselves, for they are not isolated systems. If you forget or die, the accumulated possible worlds will disappear.

Because information systems are open systems, the law of entropy is valid for the relation between them and their environment. Computers need electricity and humans need food to process information. This means that, to decrease information entropy, you must increase material entropy in the environment by a larger amount.

The law of entropy is valid for the non-material systems and their non-material environments. A word makes sense by negating the other candidates within its horizon. The color “red” is the color that is not blue, green, white, black, and so on. As I have pointed out, “The value of information is equal to the entropy it reduces.” Within the horizon “color” the concept of red reduces the information entropy. The same law applies to the truth of theories. The more opposing hypotheses a theory refutes, the more valuable it becomes. While the differential nature of information systems shows meaningfulness, the truth consists in low entropy.

In this chapter, I clarified the concept of entropy. Entropy is the logarithm of complexity, a measure of uncertainty. In the next chapter, I will explain what a system is in relation to entropy.

2. The Definition of Systems

The word “system” stems from the Greek word “σύστημα”, and its function is expressed by the verb “συνιστάναι”, namely “to combine”. A system combines its elements, thus selecting one method of combination and excluding the other real or possible methods of combination as its environment.

The environment is not the mere spatial outside of a system. What makes a system different from its environment is the selection through which the system maintains its own entropy at a low level. In other words, the boundary between a system and its environment disappears when entropy goes on increasing.

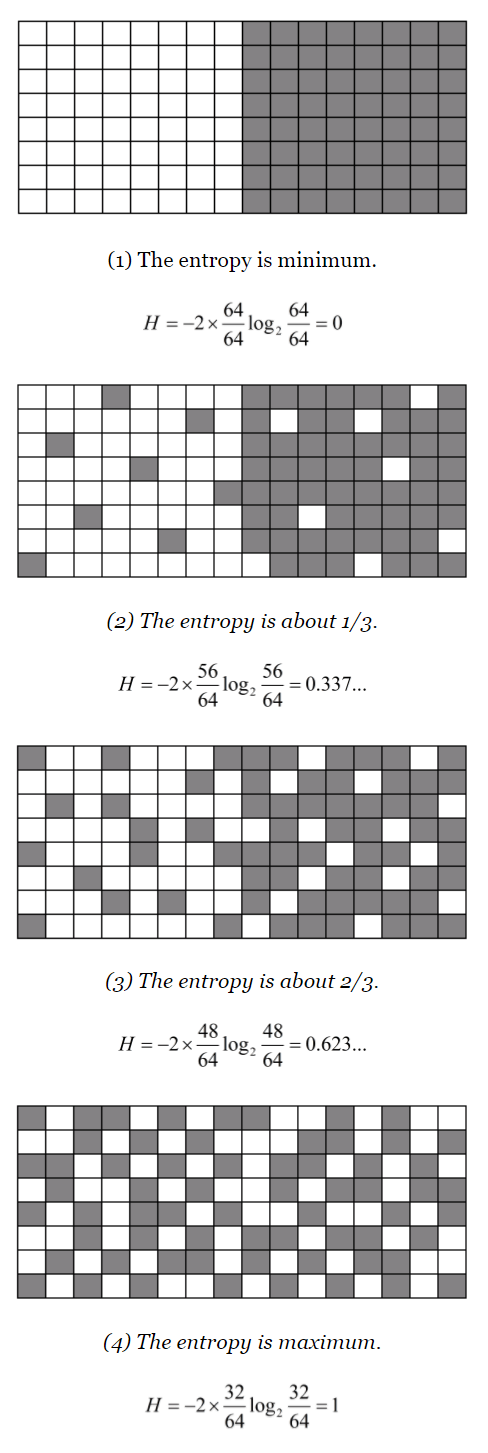

Let me illustrate it. In the first of the figures, the white system on the left neighbors the gray system on the right, which corresponds to the environment of the left one and vice versa. The boundary between them is distinct when the entropy is zero, for both systems select their elements in their own determinate ways. As entropy increases, the boundary becomes blurred. The fourth figure shows that the distinction between the system and the environment completely disappears when the entropy reaches its maximum.

Some systems, for example, a piece of ice melting in hot water, do not resist the increase in their entropy, so that the boundary finally disappears, while others, typically living organisms, are so programmed as to throw their entropy into the environment to maintain their low-entropy structures. Some living systems have consciousness and reduce information entropy, taking part in intersubjective information systems, namely social systems. We are, of course, such systems. In this chapter, I will examine the structure of these systems, namely, living, conscious, and social systems.

2.1. Living Systems

We can classify the biological definitions of life into functional ones and material ones. The former lay stress on such physiological aspects as metabolism, response to stimuli, adaptation to the environment, growth, and reproduction, while the latter lay stress on such material aspects as the protein-based cell structure. Which feature is the most essential?

Biologists usually consider it essential that living organisms should contain protein and comprise cells. But are these conditions essential? A virus consists of nucleic acid molecules with a protein coat and has no cell, but behaves like a living thing. Tierra, an artificial-life system that runs on computers, includes no protein but also behaves like life. There may be creatures on an unknown planet that are made of silicon or germanium and are otherwise very similar to living organisms on the Earth. Silicon and germanium, which are the materials of computer semiconductors, show chemical behavior similar to that of carbon. Does it make sense to regard such a new species as lifeless just because it includes no cells or protein?

The functional features are more important than the material. The most important and basic of the features mentioned above is metabolism. Metabolism includes the biosynthesis of complex organic molecules (anabolism) and their breakdown (catabolism). I will take up the most famous anabolism, photosynthesis, as an example.

The reaction formula of photosynthesis is 6CO₂ + 6H₂O → C₆H₁₂O₆ + 6O₂. Because the entropy after the reaction is lower than that before it, some used to say that photosynthesis was an exception to the law of entropy, but of course it is not. Plants can synthesize a low-entropy resource, glucose (C₆H₁₂O₆), in exchange for throwing more entropy into the environment. Sunlight heats the water that plants absorb from the ground through their roots, and the plants transpire the water through stomata into the air. From this point of view, we can schematize the reaction as follows:

The entropy before the reaction is 1951 J/K per mole of carbon dioxide, while that after it is 4562 J/K per mole, an increase of 2611 J/K. The total entropy increases[5].

Catabolism does not differ from anabolism in that it, too, decreases entropy in exchange for a larger increase of entropy in the environment. Aerobic respiration in plants and animals breaks down glucose into high-entropy carbon dioxide and water, and this increase in entropy drives the conversion of ADP (adenosine diphosphate) and Pi (inorganic phosphate) into ATP (adenosine triphosphate).

We often call ATP the “molecular currency” of intracellular energy transfer because cells can exchange ATP for useful energy at any time. They spend the currency to maintain the structure of the living organism.

It was Erwin Schrödinger who first noticed that the essence of life has something to do with entropy. He thought a living system could live by eating “negative entropy” and storing it[6]. Later Léon Brillouin abbreviated the term to “negentropy”[7]. But importing negative entropy (negentropy) from the environment is just another way of expressing the export of positive entropy to the environment. Therefore, “eating negentropy” is equivalent to “throwing entropy into the environment.”

The other functional features of life that I mentioned above (namely, response to stimuli, adaptation to the environment, growth, and reproduction) are all just means to achieve the ultimate purpose: maintaining its low-entropy structure.

Let’s examine the first two features, response to stimuli and adaptation to the environment. We often say, “We must change to adapt to environmental changes.” But this expression is misleading. We should say, “We must change in order not to change despite the environmental change.” For example, we respond to the temperature change and try to adapt to it. When it gets hot, we sweat. When it gets cold, we shiver. Both sweating and shivering are means of keeping the body temperature constant. Their purpose is to keep the information entropy low[8].

Living organisms maintain their entropy at a low level in various ways. For example, the sodium-potassium pumps of cells pump sodium ions out and potassium ions in across the plasma membrane to keep the concentrations of the two ions different inside and outside the cell. This active transport maintains the membrane potential.

The same is true of the remaining two features, growth and reproduction. For asexual organisms, there is no essential difference between the two, and their purpose is to prevent the annihilation of their low-entropy structures. But if the structures are uniform, the risk of invaders, such as viruses, annihilating all the individuals is still high. The function of sexual reproduction is to diversify individuals and increase the information entropy for invaders so that they cannot exterminate the entire species[9].

Maintaining its own entropy at a low level is not sufficient as the definiens of life. A room with an air conditioner can keep its temperature low, that is to say, maintain its entropy at a low level. But, unlike a living organism, it does not design itself or repair itself. Therefore, you can say the essence of life lies in the spontaneous reduction of its entropy programmed by genetic information.

2.2. Conscious Systems

Living organisms are information systems, but not all information systems have consciousness like us. What criterion should we apply for judging whether a certain system has consciousness or not? I would like to suggest a criterion: whether it wavers in its selection or not.

We often waver in deciding the menu of a meal, but never in deciding whether to secrete gastric juices from our stomachs to digest food. That’s why we are conscious of the menu of a meal, but not of the secretion of gastric juices.

From this, we can guess that insects have no consciousness because they are slaves of instinct. If there is no uncertainty in the connection between input and output, consciousness is an unnecessary luxury. We can virtually experience the life of insects during dreamless sleep when we lose consciousness, while the bodies continue metabolism and the brains continue reflex actions.

The agent with no freedom for selection has no consciousness. But this does not mean that every agent whose selection is uncertain has consciousness. Imagine you make a robot whose decision depends on quantum uncertainty. Its action is random and unpredictable. The robot is at the mercy of contingency and does not hesitate over which candidate to choose. Therefore, it cannot have consciousness.

Uncertainty is the state that can be otherwise than it is and the accidental agency that is not in two minds does not internalize this otherness. Consciousness is the differentiated self-identity that internalizes the otherness. That’s why the representative world that a conscious system has is differential.

Let me illustrate this philosophical thesis more concretely. Suppose you cannot decide between evolutionism and creationism. To decrease this information entropy and maintain yourself as an information system, you must select one of them as correct. Selecting evolutionism makes you an evolutionist and throws the other possibilities into your environment. Some would select creationism, and they become the others to you.

The other is not a mere person physically separated from you. You do not regard your faithful subordinates, for example, as others. Just as your arm, which moves as you order, is part of your body and not the other’s arm, your faithful subordinates, who move as you order, are not others but part of an organization as an enlarged body.

The other is an agent who can select otherwise than I select. It is not until the faithful subordinates show a sign of betrayal that they can be others. We can find a similar phenomenon within a single body. When the corpus callosum, which bridges the two cerebral hemispheres, is severed, the mind separates into two, each of which controls its own part of the body.

Modern philosophers tried to prove the existence of the alter ego, but this attempt was comical. To prove something is to show that it is necessary and has no other possibility, namely to delete otherness. You do not have to prove anything. You just have to reflect on yourself. The ego without an alter ego is as absurd as consciousness that is never in two minds about selection. The very fact that you have consciousness and often vacillate indicates that you have an alter ego.

In this sense, the intersubjective uncertainty is reflected in the intrasubjective uncertainty. Individual conscious systems reduce their intrasubjective entropy, but this reduction often increases the intersubjective entropy: anarchic disorder. It is social systems that reduce this entropy.

2.3. Social Systems

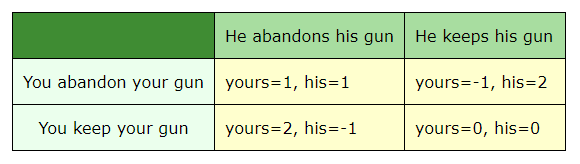

Imagine you encounter a stranger in an anarchic state. Each of you points a gun at the other and says, “Throw away your gun first and I will throw away mine.” How is this possible? In order for you to throw away your gun, the stranger must throw away his gun first, and in order for him to throw away his gun, you must throw away your gun first…

It is best for both to abandon the weapons and coexist peacefully. But there is no guarantee that the stranger will throw away his gun as he promised, even if I throw away mine. He might monopolize the weapons and enslave me.

The payoffs in the four possible cases are as follows:

My reasoning is: “Whether the opponent will abandon or keep his gun is uncertain. But in either case, my payoff will be bigger if I keep my gun. So, I should keep my gun.” But because this is also his reasoning, neither of us can abandon his weapon.
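This “in either case” reasoning can be made explicit with a small sketch. The payoff numbers below are hypothetical, chosen only to have the usual ordering of such a dilemma; they are not the values of the original payoff table, and the names `MY_PAYOFF`, `best_reply` and `dominant_choice` are likewise illustrative.

```python
# Hypothetical payoffs to "me"; keys are (my_choice, opponent_choice).
# Only the ordering 5 > 3 > 1 > 0 matters for the argument.
MY_PAYOFF = {
    ("abandon", "abandon"): 3,  # peaceful coexistence
    ("abandon", "keep"): 0,     # I am enslaved
    ("keep", "abandon"): 5,     # I monopolize the weapons
    ("keep", "keep"): 1,        # armed standoff
}
CHOICES = ("abandon", "keep")


def best_reply(opponent_choice: str) -> str:
    """My payoff-maximizing choice given what the opponent does."""
    return max(CHOICES, key=lambda mine: MY_PAYOFF[(mine, opponent_choice)])


def dominant_choice():
    """Return the choice that is best against every opponent choice,
    or None if no single choice is best in all cases."""
    replies = {best_reply(other) for other in CHOICES}
    return replies.pop() if len(replies) == 1 else None


print(dominant_choice())
```

Under these assumed numbers, keeping the gun is the best reply whichever choice the opponent makes, which is exactly why neither side disarms.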

Here, each selection indeterminately depends on that of the other. Talcott Parsons named such indeterminacy of interaction “double contingency”[10]. He formulated the problem of double contingency as follows.

There is a double contingency inherent in interaction. On the one hand, ego’s gratifications are contingent on his selection among available alternatives. But in turn, alter’s reaction will be contingent on ego’s selection and will result from a complementary selection on alter’s part. Because of this double contingency, communication, which is the preoccupation of cultural patterns, could not exist without both generalization from the particularity of the specific situations (which are never identical for ego and alter) and stability of meaning which can only be assured by »conventions« observed by both parties.[11]

Later, Parsons analyzed interaction, the premise of double contingency, as follows.

The crucial reference points for analyzing interaction are two: (1) That each actor is both acting agent and object of orientation both to himself and to the others; and (2) that, as acting agent orients to himself and to others, in all of primary modes of aspects. The actor is knower and object of cognition, utilizer of instrumental means and himself a means, emotionally attached to others and an object of attachment, evaluator and object of evaluation, interpreter of symbols and himself a symbol.[12]

The doubly contingent interaction leads to the so-called prisoner’s dilemma. The Soviet Union and the US in the Cold War era, both of which hesitated to reduce their nuclear weapons, fell into the same dilemma.

According to game theory, a strategy that gives me a higher payoff than any alternative, whatever strategy the other player(s) may adopt, is a dominant strategy. We call a combination of strategies in which no player can gain by unilaterally changing strategy a Nash equilibrium. The paradox of the prisoner’s dilemma consists in that the best individual judgment induces each player to select the Nash equilibrium, although all players know that it is not the best outcome for them, as the first example illustrates.
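A brute-force check over the four strategy combinations shows the paradox concretely. This is a sketch under stated assumptions: the game is taken to be symmetric, the payoff numbers are hypothetical (the same illustrative ordering 5 > 3 > 1 > 0, not the original table), and the function names are invented for this example.

```python
from itertools import product

CHOICES = ("abandon", "keep")

# Hypothetical payoff to a player, keyed by (own_choice, other_choice).
ROW_PAYOFF = {
    ("abandon", "abandon"): 3,
    ("abandon", "keep"): 0,
    ("keep", "abandon"): 5,
    ("keep", "keep"): 1,
}


def payoffs(mine: str, theirs: str):
    """Return (my payoff, their payoff) for a symmetric game."""
    return ROW_PAYOFF[(mine, theirs)], ROW_PAYOFF[(theirs, mine)]


def is_nash(mine: str, theirs: str) -> bool:
    """True if neither player can gain by changing only their own choice."""
    my_pay, their_pay = payoffs(mine, theirs)
    i_cannot_gain = all(payoffs(alt, theirs)[0] <= my_pay for alt in CHOICES)
    they_cannot_gain = all(payoffs(mine, alt)[1] <= their_pay for alt in CHOICES)
    return i_cannot_gain and they_cannot_gain


equilibria = [p for p in product(CHOICES, repeat=2) if is_nash(*p)]
print(equilibria)

# Both players would be better off if both abandoned their guns.
print(payoffs("abandon", "abandon"), payoffs("keep", "keep"))
```

With these assumed payoffs the only equilibrium is mutual armament, even though mutual disarmament gives both players more.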

What then should the two of you do to extricate yourselves from the anarchy and secure your lives? You cannot get out of the dilemma of double contingency by yourselves. Parsons thought a consensus based on the symbolic medium of exchange could solve the problem of double contingency, just as money could solve the inconvenience of barter. As W.S. Jevons pointed out, there must be a double coincidence of wants to enable barter.

A hunter having returned from a successful chase has plenty of game, and may want arms and ammunition to renew the chase. But those who have arms may happen to be well supplied with game, so that no direct exchange is possible. In civilized society the owner of a house may find it unsuitable, and may have his eye upon another house exactly fitted to his needs. But even if the owner of this second house wishes to part with it at all, it is exceedingly unlikely that he will exactly reciprocate the feelings of the first owner, and wish to barter houses. Sellers and purchasers can only be made to fit by the use of some commodity, some marchandise banale, as the French call it, which all are willing to receive for a time, so that what is obtained by sale in one case, may be used in purchase in another. This common commodity is called a medium of exchange, because it forms a third or intermediate term in all acts of commerce.[13]

Parsons added the adjective “symbolic” to the “medium of exchange”. He regarded money as symbolic because it stands for economic value or utility without possessing utility in itself in the primary consumption sense[14].

Parsons applied his AGIL scheme (A is for Adaptation, G is for Goal attainment, I is for Integration, L is for Latency) to the symbolic medium of exchange so that A’s medium is money, G’s is power, I’s is influence, and L’s is commitment, but it is unclear how power, influence and commitment function like money as a medium of exchange, a unit of account and a store of value.

Parsons was not the first to generalize the function of money as the medium of exchange. At the beginning of the 20th century, Georg Simmel expanded the concept of exchange into that of social interaction in general, though interaction has a broader meaning than exchange.

Each interaction, for example, conversation, love (even when different feelings reciprocate it), play, glance at each other, however, is to be regarded as an exchange. There seems to be a difference in that in the interaction you give what you yourself do not have, while in exchange you give only what you have. But the difference does not hold good, because firstly all you exercise in the interaction can always be your own energy, the devotion of your own substance; and conversely the exchange concerns not the object that the other previously had, but your own reflection of feeling that the other did not previously have; because the meaning of the exchange consists in that the sum of value after the exchange is more than that before it, that is to say, each gives more to others than he possessed.[15]

In the interaction of confessing one’s love to each other, for example, the confession does not reduce one’s love. In the economic exchange, however, one must abandon one’s money or merchandise to get what one wants. The two interactions seem to be different at this point, but in both cases, one can mutually increase one’s satisfaction. Simmel included unreturned love in love as an exchange, but he should have excluded it because it is a failed exchange.

Besides Simmel, G. H. Mead[16], Kenneth Burke[17] and others noticed the similarity between exchange and communication, but it was Parsons that influenced Luhmann.

Luhmann’s criticisms of Parsons’s theory of double contingency and the symbolic medium of exchange are as follows[18].

- Parsons regarded the contingency merely as the dependency (Abhängigkeit), not as the uncertainty, a mode between the necessity and the impossibility or the state that can be otherwise (Auch-anders-möglich-Sein).

- Parsons confined media to those for exchange, but what is important to solve the double contingency problem is not mere exchange media but communication media.

- Parsons confused the code with the message. The code is not a value or series of symbols but binary disjunction such as yes/no, true/false, right/wrong, beautiful/ugly, and so on.

- Parsons adhered to deriving the media from evolutionary differentiation, but we must give up deriving them in terms of universal systems theory and reconsider the function of media in social systems.

According to Luhmann, the function of media is to transform the improbable (Unwahrscheinliches) into the probable (Wahrscheinliches). There are three sorts of media: language (Sprache), distribution media (Verbreitungsmedien), and symbolically generalized communication media (symbolisch generalisierte Kommunikationsmedien).

Language is the medium that raises the understanding of communication beyond what is perceivable. The distribution media are such media as print and broadcast that broaden the reach of language. The symbolically generalized communication media are media that not only transmit information but also endorse the success of communication.

Important examples are: truth, love, property/money, power/right, in the beginning also religious belief and culture, today perhaps “basic value” standardized by civilization.[19]

Though Luhmann distinguished code from message or media from forms[20], he seems to have confused them. Truth is the form expressed by language as a medium and the property is the form evaluated by money as a medium. Love, power, rights, etc. are not media.

As Luhmann noted, there is fundamentally only one kind of medium, language. Here I use this term in a wider sense so that any symbol, sign, token, or signal is language. Other media, for example, money, are just special forms of language. So, first I will analyze the functions of languages.

The most fundamental function of language is abstraction. The following three functions of language derive from this:

- Representation: Just as representatives abstract common public opinion from that of their constituency, language abstracts a common attribute from various referents. Language is a representative of reality in this meaning. Since the cerebral capability to process information is limited, language must throw away most information and gather only the intersubjectively relevant information. The private experience can be other than that described by language, but language reduces this entropy.

- The medium of exchange: Language abstracts intersubjectively recognizable attributes from what is personally experienced and thought here and now. The abstraction makes the language abstract and universal enough to serve as a medium for exchanging opinions. If there are N persons, there are N(N-1)/2 relations, and there can be as many means of communication. A common language reduces this entropy by log [N(N-1)/2].

- Record of the past: Spoken languages can represent and convey present experience and thought, but to record and conserve past experience and thought, written languages are necessary. We can read the Epic of Gilgamesh, which was written approximately 4500 years ago. Written languages can reduce the uncertainty increased by oblivion. But, of course, time increases entropy. We cannot read the Indus script.

These three functions correspond to the following three functions of money:

- Unit of account: Money abstracts the economic value from commodities (goods or services) and represents it so that it can function as a standard numerical unit of value measurement. The subjective valuation can be other than that evaluated by the market, but money reduces this entropy.

- The medium of exchange: Money functions as an intermediary to avoid the inconveniences of a pure barter system. If there are N commodities, there are N(N-1)/2 relations, and there can be as many ratios of exchange. Money reduces this entropy by log [N(N-1)/2].

- Store of value: You can store the value of the sold commodities in money and retrieve it when you buy what you want. Money is a means of deferred payment, which enables you to owe a debt. Entropy increases as time passes. The future is uncertain. That’s why interest must compensate for the increase in entropy.
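The count N(N-1)/2, used above both for persons sharing a language and for commodities sharing a money, grows quickly with N. The sketch below computes it together with its logarithm. The function names are hypothetical, and taking the logarithm to base 2 (so that the result is in bits) is an assumption, since no base is specified for this formula.

```python
import math


def pairwise_relations(n: int) -> int:
    """Number of distinct pairs among n persons or commodities: N(N-1)/2."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return n * (n - 1) // 2


def entropy_reduction_bits(n: int) -> float:
    """log2 of the number of pairwise relations (base 2 is an assumption)."""
    if n < 2:
        raise ValueError("at least two elements are needed to form a relation")
    return math.log2(pairwise_relations(n))


for n in (2, 10, 100, 1000):
    print(n, pairwise_relations(n), round(entropy_reduction_bits(n), 2))
```

For 100 commodities, for example, barter would involve 4950 possible exchange ratios, which is the number whose logarithm the formula takes.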

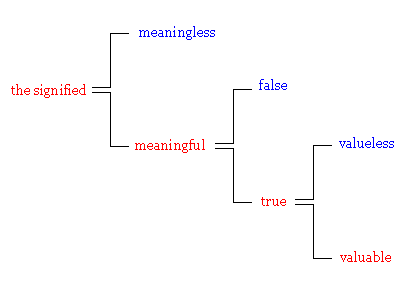

You might think money is parallel to language, but as I first noted, money is a special form of language. Language sorts out the meaningful from the meaningless, truth from falsehood, and the valuable from the valueless. On the other hand, money is engaged only in the last selection.

Money represents positive value, while punishment represents negative value. Language is the medium of cultural systems, money is the medium of economic systems, and punishment is the medium of political systems. Punishment has these three functions as a communication medium:

- Measurement of criminality: Punishment abstracts illegality from criminal acts. The amount of a fine and the length of imprisonment represent how severe a crime is. Like money, punishment functions as a standard numerical unit for measuring severity. The subjective assessment can be other than that judged by the public authority, but punishment reduces this entropy.

- The medium of exchange: We must exchange crime for punishment. The primitive form of the exchange is revenge. Like barter, revenge lacks intersubjective validity and institutionalized stability. The public authority must mediate this exchange and reduce the entropy increased by “war of all against all”.

- Deferred payment: It is ideal but practically difficult to punish a crime as soon as it is committed. It usually takes time to arrest criminals and try them. In other words, the legal system can preserve the negative value of crimes and exchange it for punishment, overcoming the lapse of time. However, it cannot overcome it completely. The statute of limitations can run out after a long period.

Money, language, and punishment are the major media of value exchange that decrease social entropy. Economic wealth, cultural honor, and political authority represented by these three media are three main sources of power.

Today the United States of America virtually mediates international relations since the United States dollar, English language, and the United States military function as the international communication media.

- The United States dollar (USD) is the standard unit of currency and the exchange medium in international markets. We evaluate other currencies in terms of USD exchange rates. Some countries peg their currencies to the USD and some even use the USD as their official currency. The USD is also the world’s foremost reserve currency.

- English is today’s lingua franca. Though English is not an official language in many countries, it is the language most often taught as a second language around the world. There are thousands of languages in the world, but, to communicate with each other, we only have to learn English instead of learning thousands of languages. It is also a promising medium for recording. Someone will easily decipher English documents in the distant future, as we do Latin ones today.

- The military of the United States acts as if it were the world’s police officer. It projects power globally and intervenes in regional wars far from its home territory. The military expenditure of the US accounted for 48% of the world total in 2005[21]. This force is the basis of Pax Americana. The UN and many other international organizations are under the influence of the US.

The world today has become more and more global and borderless. Humans are now forming one social system. Our global social system should solve the global problem of the environment.

3. The Strategy of Survival

Living systems, whether they are physiological, conscious, or social ones, consist in their low-entropy structures against the high-entropy environment. To keep their entropy low, they must increase the entropy of resources and throw the high-entropy waste into the environment. In this chapter, I will show that we are systems of negentropy, by negentropy, for negentropy.

3.1. The Purpose of Survival

Philosophers have long asked the question, “What am I living for?” I also wondered about this question and finally reached the conclusion that I am living for the sake of living itself.

Most of our activities are the means to preliminary purposes, which in turn are the means to higher purposes. The ultimate end of this chain is to secure and increase our life. Respecting others’ lives is necessary to respect mine. If I injure another person, he or she will take revenge on me, based on the universality of language. On the other hand, even if I butcher cattle to eat, they will not take revenge on me, because they cannot communicate with me. The limit of language is the limit of morality and law.

The ultimate question is, then, why I should live. If I do not have to live, I will not have to abide by any moral laws or respect other lives, because the maximum penalty is the death penalty. There can be people who feel that their own existence is of no importance. Such people will commit suicide and only those who want to live remain. The value of survival survives because it values survival. The value of survival and the survival of the value reproduce each other. That is why we regard suicide as wicked.

You might think that we sometimes admire suicide, when, for example, a mother sacrifices herself for her child. Here, you just have to expand the denotation of “I”. Mothers usually regard their children not as others but as part of themselves. In an extreme case, the denotation of “I” expands into an entire nation. The encouragement of Kamikaze, the suicidal attack, in wartime Japan is one such example. These exceptional phenomena do not refute the thesis that the ultimate purpose of a living system is to conserve itself.

Some are fond of adventure and rather pleased to run a risk. The law of entropy can explain this seemingly irrational inclination because the increase in entropy in the environment enables a system to reduce its entropy. The more varied the new experiences you gain, the more resilient to change, and thus the more able to survive, you become. A venturesome spirit does not disprove the thesis that the ultimate purpose of life is to live.

3.2. The Value for Survival

Two factors that make something valuable are usefulness and scarcity. Air is useful but has no value because anyone can get it easily. Trash is valueless even if it is unique and hard to get, because it is useless. What do both requisites have in common, then?

Usefulness is the relevance to the realization of desired purposes, ultimately the survival of our living systems. It is the lowness of entropy in two senses, namely, the intrinsic resource value and the adaptability to the purpose. We must reduce the uncertainty of connection between a specific means and a specific purpose. Therefore, adopting a specific means to a specific purpose reduces information entropy.

Scarcity is the difficulty of obtaining commodities (goods and services) that are in great demand and short supply. The scarcer commodities are, the more uncertain their acquisition becomes and the higher the entropy becomes. Acquiring or producing them is a negation of the uncertainty.

There are two theories to interpret the economic value: the marginal utility theory and the labor theory. The former is the subjective theory of value and the latter the intrinsic theory of value.

Marginal utility is the extra utility per additional unit of consumption. When you are thirsty, a glass of water is valuable. Another glass of water is not so valuable. The third is still less valuable. When you do not want to drink anymore, the marginal utility decreases to zero. This is the law of diminishing marginal utility. Marginal utility diminishes as the supply goes on increasing because the scarcity of the commodity decreases.
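The glasses-of-water example can be put into numbers with a minimal sketch. The logarithmic utility function below is purely an assumption chosen for illustration; the text does not specify any functional form. With this choice the marginal utility shrinks with every glass but never reaches exactly zero, so a satiation point would need a bounded utility function instead.

```python
import math


def total_utility(glasses: int) -> float:
    """Hypothetical utility of having drunk `glasses` glasses of water.

    Assumption: a concave logarithmic form, used only for illustration.
    """
    return math.log1p(glasses)


def marginal_utility(glasses: int) -> float:
    """Extra utility gained from the next glass after `glasses` glasses."""
    return total_utility(glasses + 1) - total_utility(glasses)


if __name__ == "__main__":
    for k in range(5):
        print(f"glass {k + 1}: marginal utility = {marginal_utility(k):.3f}")
```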

Let’s consider the labor theory of value next. Clearly, labor creates value in the case of commodities as services. In the case of commodities as goods, what we actually pay for is not things but the labor of producing and transporting the things.

Before considering why labor can create value, let’s examine the difference between labor and play. An amateur who plays golf for pleasure might play as well as professionals. Why can the latter get paid for playing golf, while the former must pay for it?

When you play golf for pleasure, you do not have to win the game and you can quit playing whenever you lose interest in golf. Professionals cannot play so arbitrarily. Playing is richer in possibilities than labor. That is to say, the entropy of labor is lower than that of play. As labor reduces more entropy, it can produce more value.

This does not mean that play is always a mere waste of resources. From the viewpoint of entropy, there is little difference between consumption and production. You can describe both of them as an increase in entropy of the environment in compensation for reducing the entropy of a system.

For example, to produce metal, we must refine ore by burning oil. Although burning oil lowers the resource value of the oil, it refines the ore and decreases its entropy. Making CD players from refined metal and selling them to consumers decreases not material entropy but information entropy. That is to say, CD players reduce the indeterminacy of listening to favorite music at a favorite place and time.

Such consumption as listening to music increases material entropy by using electricity and exhausting the CD player. But if you can get rid of stress by listening to music and refresh yourself, you can come to work well again. Since recreation as play re-creates labor, it is a kind of production.

Consumption can be significant or meaningless, just as the balance of production can be in the black or in the red. This distinction is more important than that between production and consumption.

As the law of entropy shows, we must always increase the entropy of the environment to decrease the entropy of our systems. But the reverse is not always true. An increase in the entropy of the environment sometimes does not decrease the entropy of our systems. A mere increase in entropy is a waste. If you continue to waste resources in producing and consuming, your system cannot survive for a long period.

Our ultimate purpose is to maintain and develop our systems. Consumption directly and production indirectly contribute to maintaining and developing our systems. Since maintaining and developing our systems in the present is a means of maintaining and developing them in the future, the distinction between production and consumption is relative.

You might wonder, “If I cannot justify play without the reference to production, I must dedicate my entire life to realizing the value of the means. It will make my life empty. Don’t I have to regard consumption as the realization of end value, to avoid the regressus in infinitum of the means-end chain?” But it is not contradictory that individual happiness contributes to maintaining and developing social systems. Because this coincidence is rather normal, our systems have survived up to the present.

3.3. The Work as Survival

At the beginning of the first chapter, I wrote, “How to get useful energy is the problem of resources and how to decrease or dispose of useless energy is the problem of the environment.” Physicists regard useless energy as energy that cannot be converted to work. To measure the maximum work a system can do, Zoran Rant coined the word “exergy”[22]. You can calculate exergy from the internal energy (U), entropy (S), and volume (V) of the system, together with the original temperature T0 and the pressure before the work p0:
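A commonly used closed-system form of this relation is Ex = (U - U0) + p0(V - V0) - T0(S - S0). Here U0, S0, and V0 are symbols assumed for this sketch, denoting the values the system would have at the reference state given by T0 and p0; the notation may differ from the formula originally shown at this point. The function below is a minimal illustration in SI units, not a definitive implementation.

```python
def exergy(u, s, v, u0, s0, v0, t0, p0):
    """Closed-system exergy in joules (SI units assumed).

    Ex = (U - U0) + p0 * (V - V0) - T0 * (S - S0)

    u, s, v    : internal energy (J), entropy (J/K), volume (m^3) of the system
    u0, s0, v0 : the same quantities at the reference state (assumed symbols)
    t0, p0     : reference temperature (K) and pressure (Pa)
    """
    return (u - u0) + p0 * (v - v0) - t0 * (s - s0)


if __name__ == "__main__":
    # Hypothetical numbers: 600 J more internal energy than the reference
    # state, the same volume, and 1 J/K more entropy at T0 = 300 K.
    print(exergy(u=1000.0, s=2.0, v=0.01, u0=400.0, s0=1.0, v0=0.01,
                 t0=300.0, p0=101325.0))
```

A system already at the reference state has zero exergy under this formula, which matches the idea that no work can be extracted from a system in equilibrium with its surroundings.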

Exergy tells us the objective usefulness of resources, but not the subjective. For example, though the exergy of electricity is higher than that of heat, the electricity of lightning is useless or even harmful. Because we cannot order a desired bolt of lightning to strike the desired place at the desired time, that is to say, the information entropy of lightning is very high, the electricity of lightning is not a resource.

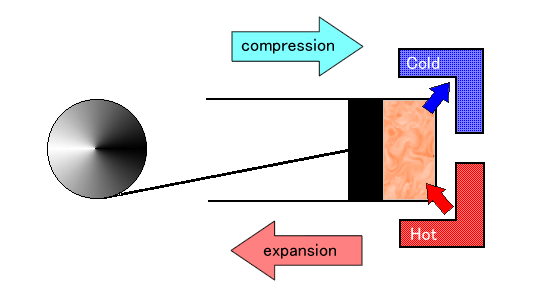

The useful work to which we convert energy must have low information entropy. The most common way to make work as predictable as we want is to make it cyclic. In fact, heat engines operating in a cycle, such as internal combustion engines, prevail in our civilization. Such an engine transfers energy from a high-temperature reservoir to a low-temperature reservoir and converts some of that energy to reciprocating and then rotating work. In 1824, Sadi Carnot (a French physicist and military engineer) developed an ideal model of the heat engine, later named the Carnot heat engine.

Here is a diagram of the Carnot heat engine.

The cycle of this engine consists of the following four steps:

- Isothermal Expansion: The gas in the cylinder absorbs heat from the high-temperature reservoir, which increases the entropy of the gas, but, as the gas expands to push the piston, the temperature of the gas remains constant.

- Adiabatic Expansion: The cylinder is thermally insulated from the high-temperature reservoir so that entropy remains constant. The gas continues to expand, which causes the gas to cool.

- Isothermal Compression: The heat flows from the gas in the cylinder into the low-temperature reservoir, which decreases the entropy of the gas, but, as the piston compresses the gas, the temperature of the gas remains constant.

- Adiabatic Compression: The cylinder is again thermally insulated from the low-temperature reservoir so that entropy remains constant. The piston continues to compress the gas, which causes the gas to warm.

At the last step, the gas comes back to the same state as at the start of the first step, thus forming a cycle, called the Carnot cycle. The diagram below locates the four steps in the Carnot cycle, where the direction of piston movement is horizontal and the direction of heat flow into and out of the cylinder is vertical:

Repeating this cycle produces low-entropy work by increasing the entropy of fuel.
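The four steps can be summarized numerically with a small sketch based on the Clausius relation Q = TΔS from section 1.1. It assumes an ideal, reversible cycle in which the gas gains entropy ΔS during isothermal expansion and gives up the same ΔS during isothermal compression, while the two adiabatic steps exchange no heat. The function name and the sample temperatures are illustrative assumptions.

```python
def carnot_cycle(t_hot, t_cold, delta_s):
    """Heat absorbed, heat rejected, work, and efficiency of one ideal cycle.

    t_hot, t_cold : reservoir temperatures in kelvin (t_hot > t_cold > 0)
    delta_s       : entropy gained by the gas in isothermal expansion (J/K)
    """
    if not t_hot > t_cold > 0:
        raise ValueError("need t_hot > t_cold > 0 (kelvin)")
    q_in = t_hot * delta_s     # isothermal expansion: Q = T * dS
    q_out = t_cold * delta_s   # isothermal compression removes the same dS
    work = q_in - q_out        # adiabatic steps exchange no heat
    efficiency = work / q_in   # equals 1 - t_cold / t_hot
    return q_in, q_out, work, efficiency


if __name__ == "__main__":
    # Hypothetical reservoirs at 600 K and 300 K, 2 J/K per cycle.
    print(carnot_cycle(600.0, 300.0, 2.0))
```

Because the gas returns to its initial state, its own entropy is unchanged over the cycle; the work comes from the difference between the heat taken in at the high temperature and the heat rejected at the low temperature.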

The Carnot cycle is not confined to artificial engines. You can also find the cycle in the atmospheric circulation (air convection):

Burning fuel (wood, coal, oil) supplies heat to the Carnot heat engine, while solar radiation supplies heat to the atmospheric circulation. The circulation consists of the following four steps:

- Isothermal Expansion: Solar radiation heats the air on the Earth’s surface, which increases the entropy of the air. The air expands, becomes less dense, and ascends, with the temperature of the air constant.

- Adiabatic Expansion: The air is thermally insulated from the surroundings so that entropy remains constant. The air continues to expand, which causes the air to cool.

- Isothermal Compression: When the air rises to the tropopause, it radiates heat toward the stratosphere, which decreases the entropy of the air. As the air loses heat and contracts, it becomes denser and descends, with the temperature of the air constant.

- Adiabatic Compression: The air is again thermally insulated from the surroundings so that entropy remains constant. The air continues to be compressed, which causes the air to warm.

At the last step, the air comes back to the same state as at the start of the first step, thus forming a cycle. The waste that living systems discharge is convertible to heat. So long as the atmospheric circulation carries the waste heat to outer space, it keeps the entropy of our living space low and we do not have to worry about environmental problems.
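How much entropy such a circulation can carry away follows from the Clausius relation in section 1.1. The sketch below is a simplification: it assumes that a quantity of heat enters the air at a warm surface temperature and that the same quantity is radiated away at a colder temperature aloft. The function name and any temperatures you plug in are assumptions for illustration, not measured values from the text.

```python
def net_entropy_export(heat_j, t_surface_k, t_top_k):
    """Entropy (J/K) leaving minus entropy entering the circulating air.

    heat_j      : heat absorbed near the surface and later radiated away (J)
    t_surface_k : temperature at which the heat is absorbed (K)
    t_top_k     : temperature at which the heat is radiated away (K)
    """
    if not t_surface_k > t_top_k > 0:
        raise ValueError("need t_surface_k > t_top_k > 0 (kelvin)")
    entropy_in = heat_j / t_surface_k   # dS = Q / T on absorption
    entropy_out = heat_j / t_top_k      # larger, because T is lower
    return entropy_out - entropy_in


if __name__ == "__main__":
    # Hypothetical values: 1000 J absorbed at 288 K and released at 255 K.
    print(net_entropy_export(1000.0, 288.0, 255.0))
```

The result is positive whenever the heat leaves at a lower temperature than it entered, which is the room the circulation has for discarding entropy produced below.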

The circulation of water is as important to living systems as that of air. The entropy of seawater is high in the sense that water mixes with salt, but solar radiation vaporizes and desalinates seawater. As moist air rises, adiabatic expansion cools the air and the water vapor begins to condense, forming clouds and then precipitation, so that water returns to the Earth’s surface.

Ocean circulation is another important circulation of water. Besides the wind-driven horizontal circulation of surface water, there is a density-driven vertical circulation of the ocean water, called thermohaline circulation. It circulates this way. The wind-driven surface currents in the Atlantic Ocean head polewards, get cold, salty, and dense, then sink into the deep water basins at high latitudes, resurface in the Pacific Ocean and come back to the Atlantic Ocean.

The circulation of air and water on the Earth functions like that of blood in our bodies. Just as the circulatory system helps cells to throw high-entropy waste out of the body, the circulation of air and water helps living systems to throw high-entropy waste out of the Earth.

The Earth is to living organisms what a multicellular organism is to cells. The input of solar radiation and the output of waste heat enable the low-entropy structures on the Earth, while the input of food and the output of excrement enable the low-entropy structures of a living organism.

If we cannot eat anything or breathe in oxygen, that is to say, if we run short of the high-temperature reservoir, we will die. How to get food and oxygen is a resource problem. If we cannot drink water or excrete wastes, that is to say, if we run short of the low-temperature reservoir, we will die. How to get water and dispose of wastes is an environmental problem. We will also die if the circulatory system stops. What should we call the problem of maintaining circulation? Since low-entropy work such as circulation is not only a means but also an end, we might name this the problem of life.

So, there are three thermodynamic problems for living systems to survive.

- How to get the high-temperature source

- How to get the low-temperature source

- How to get the low-entropy work from the former two

Based on these thermodynamic problems, there are three problems for information systems to survive.

- How to get the low-entropy resource from the environment

- How to throw the high-entropy waste into the environment

- How to maintain the low-entropy structure against the environment

These three correspond to three problems of resources, environment, and life.

4. References

- Carnot, Sadi. Reflections on the Motive Power of Fire: And Other Papers on the Second Law of Thermodynamics. Dover Publications; Illustrated edition (May 9, 2012).

- Carnot, Sadi. Réflexions sur la puissance motrice du feu et sur les machines propres à développer cette puissance. HardPress (May 1, 2019).

- Turns, Stephen R., Pauley, Laura L. Thermodynamics: Concepts and Applications. Cambridge University Press; 2nd edition (February 27, 2020).

- Lemons, Don S. A Student’s Guide to Entropy (Student’s Guides). Cambridge University Press; 1st edition (August 29, 2013).

- Ben-Naim, Arieh. Entropy: The Greatest Blunder in the History of Science. Ben-Naim, Arieh.

- Palmer, Paul I. The Atmosphere: A Very Short Introduction. OUP Oxford; 1st edition (March 13, 2017).

- Turcotte, Donald L., Schubert, Gerald. Geodynamics. Cambridge University Press; 3rd edition (March 31, 2014).

- [1] It is not clear when Clausius first proposed the term. In his paper (1865) cited below, he said, “I proposed to name the magnitude S the entropy of the body…” in past tense.

- [2] Rudolf Clausius (1865) Über verschiedene für die Anwendung bequeme Formen der Hauptgleichungen der mechanischen Wärmetheorie, in Vierteljahrsschrift der Naturforschenden Gesellschaft in Zürich. 10, S.1-59.

- [3] Ludwig Boltzmann (1877) Über die Beziehung zwischen dem zweiten Hauptsatz der mechanischen Wärmetheorie und der Wahrscheinlichkeitsrechnung, respective den Sätzen über das Wärmegleichgewicht, in Wissenschaftlichen Abhandlungen 2, 164, pp. 373-435.

- [4] Claude E. Shannon (1948) A mathematical theory of communication, in Bell System Technical Journal, vol. 27, pp. 379-423, pp. 623-656.

- [5] Atushi Tuchida (1992) Netugaku Gairon – Seimei Kankyo wo fukumu Kaihoukei no Netu Riron, Asakura Shoten. p.99.

- [6] Erwin Schrödinger (1943) Was ist Leben? – Die lebende Zelle mit den Augen des Physikers betrachtet.

- [7] Léon Brillouin (1951) Maxwell’s Demon Cannot Operate — Information and Entropy I, Journal of Applied Physics, 22.

- [8] Keeping the temperature of the body warmer than that of its environment means keeping the thermodynamic entropy of the body higher than that of the environment. But it also means keeping the information entropy of the body lower than that of the environment, as the fluctuation of bodily temperature is smaller than that of the environment.

- [9] This is the so-called Red Queen’s Hypothesis. See Leigh Van Valen (1973) A new evolutionary law, Evolutionary Theory, No 1. p.1-30.

- [10] Talcott Parsons (1964) The Social System, 2nd edition, The Free Press of Glencoe. p.36-43. Not all indeterminacy specific to social systems is the double contingency. For example, the industry is bound to the regulation of bureaucracy, the bureaucracy is bound to the politicians’ orders and the politicians are bound to the industry lobby. This tripartite dependent relation of decision-making is not a mere combination of double contingency. So, it might be better to use a more comprehensive term than double contingency, “multi-contingency”.

- [11] Talcott Parsons, Edward Shils (1951) Toward a General Theory of Action, Cambridge, Massachusetts, Harvard University Press. p.16.

- [12] Talcott Parsons (1968) Interaction, International Encyclopedia of the Social Sciences, volume 7. p.436.

- [13] William Stanley Jevons (1875) Want of Coincidence in Barter, Money and the Mechanism of Exchange Chapter 1, D. Appleton and Company.

- [14] Talcott Parsons (1963) On the Concept of Political Power, Proceedings of the American Philosophical Society, 107. p.236.

- [15] “Jede Wechselwirkung aber ist als ein Tausch zu betrachten: jede Unterhaltung, jede Liebe (auch wo sie mit andersartigen Gefühlen erwidert wird), jedes Spiel, jedes Sichanblicken. Und wenn der Unterschied zu bestehen scheint, daß man in der Wechselwirkung gibt, was man selbst nicht hat, im Tausch aber nur, was man hat – so hält dies doch nicht stand. Denn einmal, was man in der Wechselwirkung ausübt, kann immer nur die eigene Energie, die Hingabe eigener Substanz sein; und umgekehrt, der Tausch geschieht nicht um den Gegenstand, den der andere vorher hatte, sondern um den eigenen Gefühlsreflex, den der andere vorher nicht hatte; denn der Sinn des Tausches: daß die Wertsumme des Nachher größer sei als die des Vorher – bedeutet doch, daß jeder dem anderen mehr gibt, als er selbst besessen hat.” Georg Simmel (1900) Philosophie des Geldes, Duncker & Humblot Siebente Auflage, Gesammelte Werke. p.32.

- [16] George Herbert Mead (1934) Mind, Self, and Society, University of Chicago Press.

- [17] Kenneth Burke (1945) A Grammar of Motives, University of California Press.

- [18] Niklas Luhmann (1975) Soziologische Aufklärung 2. Aufsätze zur Theorie der Gesellschaft, Westdeutscher Verlag. p.171-172.

- [19] “Wichtige Beispiele sind: Wahrheit, Liebe, Eigentum/Geld, Macht/Recht; in Ansätzen auch religiöser Glaube, Kunst und heute vielleicht zivilisatorisch standardisierte »Grundwerte«.” Niklas Luhmann (1987) Soziale Systeme – Grundriß einer allgemeinen Theorie, suhrkamp, S.222.

- [20] Niklas Luhmann (1990) Essays on Self Reference, Columbia UP. p.220.

- [21] Stålenheim, P., Fruchart, D., Omitoogun, W. and Perdomo, C. (2006) SIPRI Yearbook 2006, Oxford University Press. p. 300.

- [22] Zoran Rant (1956) Exergie, ein neues Wort für “technische Arbeitsfähigkeit”, Forschung auf dem Gebiete des Ingenieurwesens 22(1) : 36–37.
